Lithium-ion batteries are the new big thing in the power storage sector, surpassing lead-acid and nickel-cadmium batteries with their state-of-the-art design and revolutionary mechanism. They are used widely in many appliances and have established themselves as the dominant type of rechargeable battery. Here is a breakdown of how this particular battery functions, and why its capacity reduces over time.

Roles of Anode, Cathode, and Electrolyte in Charging and Discharging

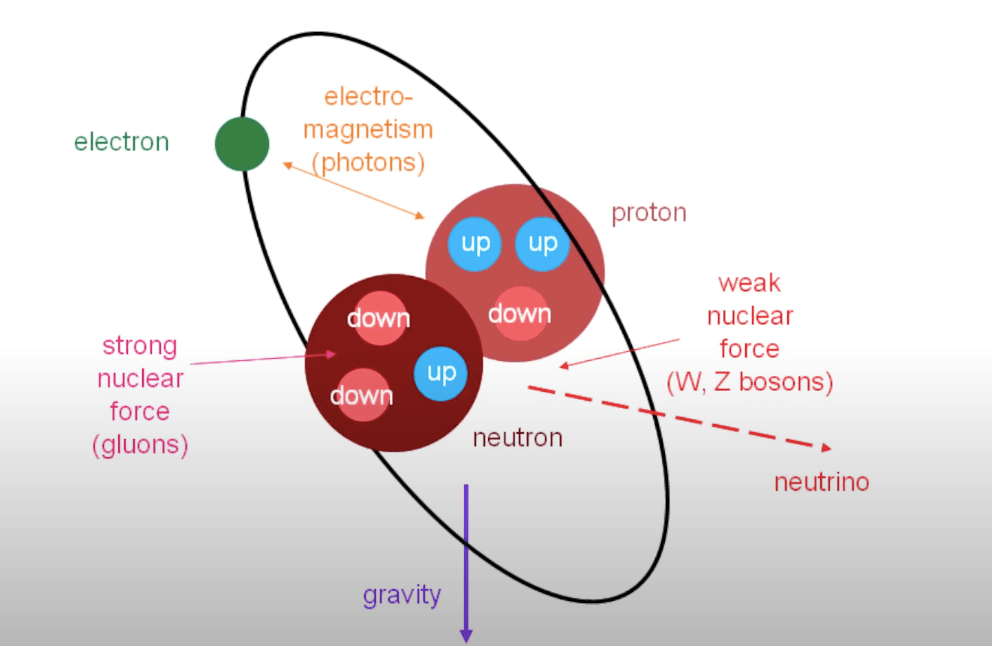

Like any other battery, the lithium-ion battery consists of an anode and a cathode. However, unlike what you may have learned in the electrochemistry chapter at school, these are not single-element electrodes. In a lithium-ion battery, the anode (the negatively charged electrode) is made of graphite, with lithium intercalated between its flat layers of hexagonal rings, which are separated by a relatively large distance of about 340 pm. Essentially, graphite acts as a storage space to house lithium atoms.

It is well known that lithium, being an alkali metal, readily loses its single valence electron to form a lithium ion (Li+). This happens to the lithium atoms stored in the graphite, and as they release electrons, the resulting lithium ions move out of the graphite. The released electrons, in turn, move out of the anode and into the circuit, supplying the electrical components in the device that require current.

Now let’s look at the cathode (the positively charged electrode). There are different cathodes available, but for now, let’s take a cathode containing cobalt and oxygen: cobalt dioxide (CoO2). Here cobalt has given up electrons to oxygen and sits in the oxidized Co4+ state. So the cobalt atom is missing an electron, which it wants back. Hence the electrons released from the anode travel to the cathode and satisfy the cobalt atoms’ shortage of electrons, reducing Co4+ to Co3+.

However, as more and more electrons reach the cathode, its negative charge builds up, and since like charges repel, the flow of electrons starts to slow down. Another issue is that the Li+ ions left behind at the anode also need their charge balanced. Both problems are solved by placing an electrolyte between the anode and the cathode. The electrolyte is generally a lithium salt, such as LiPF6 or LiBF4, dissolved in an organic solvent such as ethylene carbonate or dimethyl carbonate. It lets ions pass but not electrons, so Li+ ions travel through it from the anode to the cathode. There, each lithium ion wedges itself into the cobalt and oxygen structure of the cathode, forming lithium cobalt oxide (LiCoO2). Remember that the electrons moving externally from anode to cathode are gained by the cobalt ion (Co4+), not by the lithium ion.

This describes the whole process of discharging. When you use your phone or laptop, electrons move externally from anode to cathode, and lithium ions move internally through the electrolyte from anode to cathode too. As this process continues, the battery percentage on your screen decreases. When all the lithium ions have moved to the cathode and there is no more external electron flow, your battery is dead and there is no power in your phone.
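Because each lithium ion that crosses the electrolyte is matched by exactly one electron in the external circuit, a battery's rated capacity can be turned into a rough count of the lithium that has to make the trip. The sketch below does that arithmetic using the Faraday constant. The 3000 mAh figure is a hypothetical phone battery chosen for illustration, not a value from this article, and the function names are made up for this example.

```python
FARADAY_C_PER_MOL = 96485.0  # charge carried by one mole of electrons, in coulombs
LITHIUM_G_PER_MOL = 6.94     # molar mass of lithium, in grams per mole


def lithium_moles_for_capacity(capacity_mah: float) -> float:
    """Moles of Li+ that must cross the electrolyte to deliver capacity_mah."""
    coulombs = capacity_mah / 1000.0 * 3600.0  # mAh -> Ah -> coulombs
    return coulombs / FARADAY_C_PER_MOL        # one electron per Li+ ion


def lithium_grams_for_capacity(capacity_mah: float) -> float:
    """Mass of lithium corresponding to that many moles."""
    return lithium_moles_for_capacity(capacity_mah) * LITHIUM_G_PER_MOL


if __name__ == "__main__":
    capacity = 3000.0  # hypothetical phone battery, in mAh (assumption)
    print(f"{lithium_moles_for_capacity(capacity):.3f} mol of Li+")
    print(f"{lithium_grams_for_capacity(capacity):.2f} g of lithium")
```

Under these assumptions, well under a gram of lithium shuttling between the electrodes is enough to run a phone for a full discharge. A real cell contains more lithium than this, since not all of it takes part in cycling.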

But phones and laptops are not use-once-and-throw devices. You can reuse them again and again. How is this done? Charging. When you plug your device into a USB charger, the charger supplies enough electrical energy to pull electrons back from the cobalt atoms and send them back to the anode. The lithium ions are also kicked back to their initial home, the graphite structure of the anode. When all the electrons and lithium ions have returned to the anode, we say the battery is fully charged, at 100%.

If you look closely, charging and discharging are exact opposites of each other, which leads to an interesting observation: lithium ions move back and forth through the electrolyte in this battery. It’s almost like a swing. That’s why lithium-ion batteries are also known as swing batteries, or rocking-chair batteries.
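The back-and-forth motion can be summarized as a pair of reversible half-reactions. This is an idealized sketch that assumes fully lithiated graphite (LiC6) on one side and the cobalt and oxygen cathode described above on the other; real cells cycle only part of their lithium. Read left to right, the equations describe discharging; read right to left, charging.

```latex
\begin{align*}
\text{Anode:}\quad   & \mathrm{LiC_6} \rightleftharpoons \mathrm{C_6} + \mathrm{Li^+} + e^- \\
\text{Cathode:}\quad & \mathrm{CoO_2} + \mathrm{Li^+} + e^- \rightleftharpoons \mathrm{LiCoO_2} \\
\text{Overall:}\quad & \mathrm{LiC_6} + \mathrm{CoO_2} \rightleftharpoons \mathrm{C_6} + \mathrm{LiCoO_2}
\end{align*}
```

Adding the two half-reactions cancels the Li+ and the electron on each side, which is why the overall equation shows lithium simply changing homes between graphite and the cobalt oxide.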

Additional Components: Separator and Collectors

Now that we have understood the purpose of the three main components (anode, cathode, and electrolyte) and how they work together, let’s add a few more components to enhance this battery even further. Let’s start with the separator, a non-conducting, semi-permeable porous membrane. This layer sits in the middle of the electrolyte and prevents the anode and cathode from touching each other, while still letting lithium ions through. If such contact does happen, things can get explosive, as the resulting short circuit lets the reaction accelerate uncontrollably. Next are the current collectors. To carry electrons efficiently into and out of the anode and cathode, each electrode is paired with a conducting metal layer: copper on the side of the graphite anode and aluminum on the side of the cobalt dioxide (CoO2) cathode.

Why does a device’s battery capacity reduce over time?

Have you ever noticed that, over the years, your phone’s battery gets depleted faster? There are simple reasons for this. The first is that, during recharging, incoming electrons and lithium ions can react with the electrolyte salt and organic solvent to form a layer called the SEI (solid electrolyte interphase). The formation of this layer permanently consumes lithium ions, making fewer of them available. The fewer lithium ions there are, the sooner the battery runs out.
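To get a feel for how a small permanent loss per charge adds up, here is a toy calculation. It assumes, purely for illustration, that every charge cycle removes the same fixed fraction of the usable lithium; real capacity fade is not this regular, and the starting capacity, loss fraction, and cycle count below are hypothetical values, not measurements.

```python
def remaining_capacity(initial_mah: float, loss_per_cycle: float, cycles: int) -> float:
    """Toy model: each cycle permanently removes a fixed fraction of usable lithium."""
    if not 0.0 <= loss_per_cycle < 1.0:
        raise ValueError("loss_per_cycle must be in the range [0, 1)")
    if cycles < 0:
        raise ValueError("cycles must be non-negative")
    # Capacity is assumed proportional to the lithium still available for cycling.
    return initial_mah * (1.0 - loss_per_cycle) ** cycles


if __name__ == "__main__":
    initial = 3000.0  # hypothetical starting capacity in mAh (assumption)
    loss = 0.0002     # hypothetical fraction of lithium lost per cycle (assumption)
    for n in (0, 100, 500, 1000):
        print(f"after {n:4d} cycles: {remaining_capacity(initial, loss, n):.0f} mAh")
```

Even a loss that looks negligible for a single charge compounds into a noticeable drop after a few hundred cycles, which matches the everyday experience of an older phone running out sooner.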

The first cause is not in your hands, but the second one is. If you let a battery discharge fully until it is at 0%, or dead, you have essentially waited until all the lithium ions have reached the cathode side. At this point, some lithium ions combine directly with oxygen to form lithium oxide, and some cobalt atoms combine directly with oxygen to form cobalt oxide. This, again, is irreversible, leaving fewer lithium ions available for charging and discharging.

To prevent this from happening, it is recommended to charge your phone or laptop once it drops to 20-40%, rather than waiting for it to drop dead before you plug it in.